Data doesn’t exist in a vacuum, which is why Kogod’s Crown Prince of Bahrain Chair in International Finance, Robin Lumsdaine, insists that her students learn about the world and consider multiple factors when making decisions. “In my class, we analyze real world evidence,” she says. “It is so important to be able to evaluate evidence to form our own opinions.”

Few know more about uncovering the meaning behind the numbers than Lumsdaine. Her background in international banking, from her work at the Federal Reserve and Deutsche Bank, heavily informs her teaching, her research, and her work as an econometrician.

“International finance is basically all about managing risk associated with currency fluctuations,” says Lumsdaine. “What I’m interested in research-wise are things that are policy-relevant. A lot of my research relates to real-world applications.”

So when she was offered the opportunity to evaluate data from a randomized controlled trial involving the delivery of education in rural Guinea-Bissau, Lumsdaine accepted without hesitation.

“My involvement in the project actually came about because I was aware of the great work that this nonprofit Effective Intervention is doing in very poor, developing countries,” says Lumsdaine. “They asked me if I wanted to be the responsible statistician on the analysis of the data that had come in from their Guinea-Bissau intervention, and I agreed immediately.”

Lumsdaine’s data analyses revealed that Effective Intervention’s non-governmental primary schooling helped children in poor, remote regions dramatically increase their literacy and math skills.

“The main thing we were looking at in this study was educational attainment as assessed by Early Grade Reading Assessment (EGRA) and Early Grade Mathematics Assessment (EGMA) test scores,” Lumsdaine says. “We chose them to serve as our primary outcome because they are particularly sensitive in measuring small differences in ability among children who have very low levels of learning, such as those in many parts of our trial area.”

In addition to the primary outcomes of interest, Lumsdaine and coauthors explored other variables that may have affected how well a student performed, as well as possible knock-on benefits.

“We looked at spillover effects to siblings,” Lumsdaine explains as an example. “Did the siblings of the kids that were in the intervention do better than the kids that were in the control group? Did it matter whether the parents knew any Portuguese, which is the language the test was administered in? And there were a whole bunch of other secondary analyses.”

In February, the paper was accepted for publication in the *Journal of Public Economics*. The experiment and analysis were also recently recognized by Arnold Ventures’ Evidence-Based Policy team for their scientific integrity. “The analysis plan was registered before I ever saw the data,” says Lumsdaine, noting that this meant the team didn’t know in advance what the findings would be.

“It takes such a long time to conduct the research. You wait four years, and then let’s say it turns out you find nothing,” she says. “With this particular experiment, it’s important to emphasize that there wasn’t any preconception about how the analysis would turn out. You wouldn’t conduct an experiment if you didn’t hope it would have an effect, but nobody had any idea of how big the gains would be. I mean, they’re massive!”

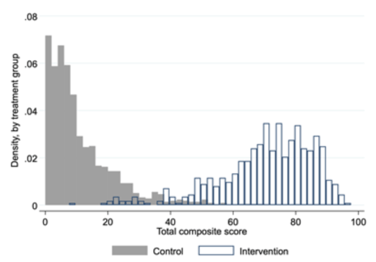

In the study, children in the control group earned a mean composite (combined reading and math) test score of 11.2 percent, while children in the intervention group scored a mean of 70.5 percent. The gap was just as dramatic when the children’s math and reading scores were examined separately. Figure 1 shows how large the difference was by illustrating the entire distribution of test scores for children in the intervention group compared with those in the control group.

But the importance of this study goes beyond finding out that an intervention worked: it’s knowing that, in the future, similar interventions can be expanded within Guinea-Bissau or replicated in other countries. The Effective Intervention team is continuing its work.